Recently, while winding down from a long day, I flipped the channel and “The Goonies” was on. I left it there, thinking an old movie I’d seen a dozen times would put me to sleep quickly. As it turns out, I quickly got back into it. By the time the gang hit the wishing well and Mikey gave his speech, I was inspired to write a blog, this one in particular. “Cause it’s their time – their time up there. Down here it’s our time, it’s our time down here.”

This got me thinking about data center network overlays, and the physical networks that actually move the packets, which some Network Virtualization proponents have dubbed “underlays.” The more I think about it, the more I realize that it truly is our time down here in the “lowly underlay.” I don’t think there’s much argument around the need for change in data center networking, but there is a lot of debate on how. Let’s start with their time up there: “Network Virtualization.”

Network Virtualization

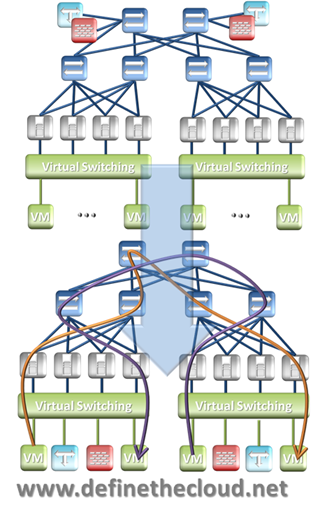

Unlike server virtualization, Network Virtualization doesn’t partition out the hardware and separate out resources. Network Virtualization uses server virtualization to virtualize network devices such as switches, routers, firewalls and load balancers. From there it creates virtual tunnels across the physical infrastructure using encapsulation techniques such as VXLAN, NVGRE and STT. The end result is a virtualized instantiation of the current data center network in x86 servers, with packets moving in tunnels that segregate them from other traffic on the physical networking gear. The graphic below shows this relationship.
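To make the encapsulation step concrete, here is a minimal Python sketch of how a VXLAN header wraps an inner Ethernet frame. The 8-byte header layout, the 24-bit VNI and UDP port 4789 come from RFC 7348; the function names are my own for illustration, and the outer Ethernet/IP/UDP headers that a real tunnel endpoint would add are left out.

```python
import struct

VXLAN_UDP_PORT = 4789         # IANA-assigned destination port (RFC 7348)
VXLAN_FLAG_VNI_VALID = 0x08   # the "I" flag: the VNI field is valid


def vxlan_header(vni: int) -> bytes:
    """Build the 8-byte VXLAN header for a 24-bit VNI."""
    if not 0 <= vni < 2 ** 24:
        raise ValueError("VNI must fit in 24 bits")
    # Word 1: flags in the top byte, then 24 reserved bits.
    # Word 2: VNI in the top 24 bits, then 8 reserved bits.
    return struct.pack("!II", VXLAN_FLAG_VNI_VALID << 24, vni << 8)


def encapsulate(vni: int, inner_frame: bytes) -> bytes:
    """Return the UDP payload: VXLAN header followed by the inner frame."""
    return vxlan_header(vni) + inner_frame


def decapsulate(udp_payload: bytes) -> tuple[int, bytes]:
    """Split a VXLAN UDP payload back into (vni, inner_frame)."""
    if len(udp_payload) < 8:
        raise ValueError("payload shorter than a VXLAN header")
    flags_word, vni_word = struct.unpack("!II", udp_payload[:8])
    if not (flags_word >> 24) & VXLAN_FLAG_VNI_VALID:
        raise ValueError("VNI-valid flag is not set")
    return vni_word >> 8, udp_payload[8:]
```

The point to notice is that the physical gear forwards on the outer headers only; the tenant's original frame rides along as opaque payload.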

Network Virtualization in this fashion can provide some benefits in the form of provisioning time and automation. It also introduces some new challenges, discussed in more detail here: What Network Virtualization Isn’t (be sure to read the comments for alternate viewpoints). What network virtualization doesn’t provide, in any form, is a change to the model we use to deploy networks and support applications. The constructs and deployment methods for designing applications and applying policy are not changed or enhanced. All of the same broken or misused methodologies are carried forward. When working with customers to begin virtualizing servers, I would always recommend against automated physical-to-virtual server migration, suggesting a rebuild in a virtual machine instead.

The reason for that is twofold. First, server virtualization was a chance to re-architect based on lessons learned. Second, simply virtualizing existing constructs is like hiring movers to pack your house along with the dirt, cobwebs and so on, then move it all to the new place and unpack. The smart way to move a house is to donate or yard-sale what you won’t need, pack the things you do, move into a clean place and arrange everything optimally for the space. The same applies to server and network virtualization.

Faithful replication of today’s networking challenges as virtual machines with encapsulation tunnels doesn’t move the bar for deploying applications. At best it speeds up, and automates, bad practices. Server virtualization hit the same challenges. I discuss what’s needed from the ground up here: Network Abstraction and Virtualization: Where to Start?. Software-only network virtualization approaches are challenged both by the restrictions of the hardware that moves their packets and by a methodology that misjudges where the pain points really are. It’s their time up there.

Underlays

The physical transport network, which some minimize as the “underlay,” is actually more important in making a shift to network programmability, automation and flexibility. Even network virtualization vendors will agree on this, to some extent, if you dig deep enough. Once you cut through the marketecture of “the underlay doesn’t matter” you’ll find recommendations for a non-blocking fabric of 10G access ports and 40G aggregation in one design or another. This is because overlays have no visibility into congestion and no control of delivery prioritization such as QoS.
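As a rough illustration of what “non-blocking” means for a 10G access / 40G aggregation design, the sketch below computes a switch's oversubscription ratio (total access bandwidth divided by total uplink bandwidth). The port counts are assumptions chosen for the example, not figures from any vendor's design guide, and a 1:1 ratio is only the common shorthand for non-blocking.

```python
def oversubscription(access_ports: int, access_gbps: float,
                     uplink_ports: int, uplink_gbps: float) -> float:
    """Ratio of downstream (access) to upstream (uplink) bandwidth.

    1.0 means the uplinks can carry every access port at line rate;
    anything above 1.0 means traffic can queue or drop under load.
    """
    return (access_ports * access_gbps) / (uplink_ports * uplink_gbps)


# Hypothetical leaf switch: 48 x 10G access ports.
print(oversubscription(48, 10, 12, 40))  # 12 x 40G uplinks
print(oversubscription(48, 10, 4, 40))   # 4 x 40G uplinks
```

With twelve 40G uplinks the ratio is 1:1; with four it is 3:1, and the overlay riding on top has no way to see or react to the resulting congestion.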

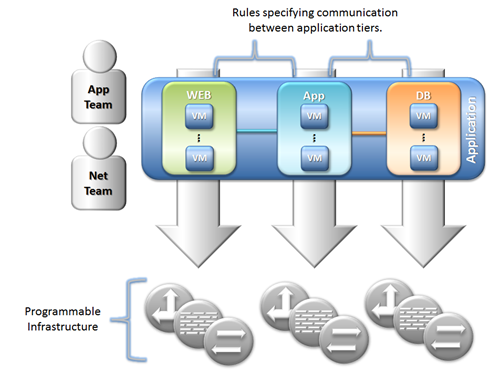

Additionally, Network Virtualization has no ability to abstract the constructs of VLAN, Subnet, Security, Logging and QoS from one another, as described in the link above. To truly move the network forward in a way that provides automation and programmability in a model that’s cohesive with application deployment, you need to include the physical network with the software that will drive it. It’s our time down here.

By marrying physical innovations that provide a means for abstraction of constructs at the ground floor with software that can drive those capabilities, you end up with a platform that can be defined by the architecture of the applications that will utilize it. This puts the infrastructure as a whole in a position to be deployed in lock-step with the applications that create differentiation and drive revenue. This focus on the application is discussed here: Focus on the Ball: The Application. The figure below, from that post, depicts this.

The advantage to this ground up approach is the ability to look at applications as they exist, groups of interconnected services, rather than the application as a VM approach. This holistic view can then be applied down to an infrastructure designed for automation and programmability. Like constructing a building, your structure will only be as sound as the foundation it sits on.
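One way to picture “applications as groups of interconnected services” is the small sketch below: the application is described as tiers plus the connections allowed between them, and that description is expanded into rules for whatever attachment points the tiers live on, physical or virtual. The class, function and port names are all hypothetical; this is an illustration of the idea, not any product's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Contract:
    """An allowed connection between two application tiers."""
    consumer: str
    provider: str
    port: int


def render_rules(placement: dict[str, list[str]],
                 contracts: list[Contract]) -> list[tuple[str, str, int]]:
    """Expand tier-level contracts into (source, destination, port) rules.

    `placement` maps each tier to the attachment points it currently
    occupies (switch ports, hypervisor vNICs, and so on).
    """
    rules = []
    for contract in contracts:
        for src in placement[contract.consumer]:
            for dst in placement[contract.provider]:
                rules.append((src, dst, contract.port))
    return rules


# Hypothetical application: a web tier that may call an app tier on 8080.
placement = {"web": ["leaf1/p1"], "app": ["leaf2/p3", "hv7/vm2"]}
contracts = [Contract("web", "app", 8080)]
for rule in render_rules(placement, contracts):
    print(rule)
```

The application owner only ever writes the contracts; where the tiers happen to sit, and whether that is a bare-metal port or a VM, is the infrastructure's problem.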

For a little humor (nothing more) here’s my comic depiction of Network Virtualization.

I think you miss the point entirely. After years of waiting upon the network, the servers have developed a more agile and responsive implementation of the same. Therefore, servers no longer need to wait for the next overhaul, acquisition, or deployment. Update the software and get on with business.

Networking is becoming like the department of transportation. Lay the roads, establish the lanes, and let the traffic manage its destination and flow. With network virtualization, the end state will be when software (applications or infrastructure) dynamically configures physical assets (switches, routers, firewalls) with policies to support service delivery.

Your comic should read “server team hands packet to network team and says take this to port 42.”

I agree that networking has been slow to move forward into automation and rapid deployment while servers, storage, etc. have made leaps and bounds. There are several reasons for this, not the least of which is the importance of the network and the fact that it touches ALL other systems.

Your arguments are based on the woulds, coulds and shoulds of network virtualization rather than today’s reality. Today network virtualization places a visibility barrier between the encapsulated traffic and the network that transports it. This can lead to issues with troubleshooting and congestion. Additionally, it adds a disparate layer of management, requiring separate tools and skill sets to manage the virtual traffic and the physical.

Equally important, Network Virtualization is only applicable to virtualized workloads today. There is no cohesive toolset for physical workloads. There is also no ability to provide the same functionality to PaaS workloads. If it’s not an application in a VM, it gets a separate management model and set of features. Even if it’s an application in a VM, it gets separate management and features if it’s on a non-supported hypervisor or overlay technique. Bridging and routing between disparate overlays is not possible, and stitching back to VLANs is a kludge.

The end goal should definitely be software-controlled infrastructure that is configured dynamically and programmatically. This shouldn’t mean retraining developers to understand network terms and flows and having them program “take this to port 42.” It should be a policy-driven model in which application requirements and connectivity are defined and mapped, and then automatically applied to the infrastructure at the touch points where the application exists.

Recreating the physical network in a set of VMs and slapping on an overlay doesn’t move the needle forward; it simply provides a hack to allow for automation and speed of deployment for broken practices.

Joe

I agree, this discussion is datacenter focused and does not address other networking needs. However, when dealing with SDN and Virtual Networking we are addressing specific use cases which tend to be highly standardized toward commodity hardware or virtualization.

While I do reference future architectures, I also speak from real deployments. These architectures have flat physical networks (1-2 tiers) with a single consumable IP space (management network and backend not included) bordered by virtual routers and firewalls. All server instances operate behind this “physical” network with virtual segmentation, filtering, and load balancing. The physical network provides interconnects between virtual routers and the internet.

I agree with your other points to a degree, especially that the SDN/overlay solution breaks down when managing virtual + physical servers. At the same time, PaaS is enabled through virtual overlays in many instances.

Also, if the network has progressed slowly due to the number and complexity of its connections, shouldn’t we simplify and standardize to the greatest degree? Why add numerous management points, standards, protocols, and auditors?

Does the physical network need high levels of visibility when its task is streamlined to transport and delivery? If routing/firewalling/load balancing/inspection is offloaded to virtual solutions, the IP header with QoS tagging should be sufficient for the network.

Another interesting discussion recently pointed toward the physical network becoming another systems bus. I see SDN/overlays headed this direction.
