For the last several months there has been a lot of chatter in the blogosphere and on Twitter about FCoE and whether full-scale deployment requires QCN. There are two camps on this:

- FCoE does not require QCN for proper operation at scale.

- FCoE does require QCN for proper operation at scale.

Typically the camps break down as follows (there are exceptions):

- HP camp stating they’ve not yet released a suite of FCoE products because QCN is not fully ratified and they would be jumping the gun. The flip side of this is stating that Cisco jumped the gun with their suite of products and will have issues with full-scale FCoE.

- Cisco camp stating that QCN is not required for proper FCoE frame flow and HP is using the QCN standard as an excuse for not having a shipping product.

For the purpose of this post I’m not camping with either side; I’m not even breaking out my tent. What I’d like to do is discuss when and where QCN matters, what it provides, and why. The intent is that customers, architects, engineers, etc. can decide for themselves when and where they may need QCN.

QCN: QCN is a form of end-to-end congestion management defined in IEEE 802.1Qau. The purpose of end-to-end congestion management is to ensure that congestion is controlled from the sending device to the receiving device in a dynamic fashion that can deal with changing bottlenecks. The most common end-to-end congestion management tool is TCP window sizing.

TCP Window Sizing:

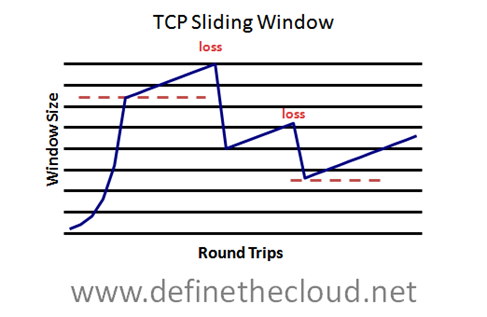

With window sizing, TCP dynamically determines how many segments to send at once without waiting for an acknowledgement. It continuously ramps this number up as long as there is room in the pipe and acknowledgements are being received. If a packet is dropped due to congestion and its acknowledgement is not received, TCP halves the window size and starts ramping up again. This provides a mechanism by which the maximum available throughput can be found dynamically.

The diagram above shows the dynamic window size (total packets sent prior to acknowledgement) over the course of several round trips. You can see the initial fast ramp-up followed by a gradual increase until a packet is lost; from there the window is reduced and the slow ramp begins again.
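To make that ramp-and-halve pattern concrete, here is a small sketch that reproduces the shape of the diagram. It is a simplified Reno-style model I’m assuming for illustration: it counts whole segments per round trip, the starting threshold of 16 segments and the single loss at round trip 8 are arbitrary choices, and it ignores retransmission timeouts (after a timeout real TCP falls back much further than half).

```python
def window_trajectory(rounds, loss_rounds, ssthresh=16):
    """Congestion window (in segments) at the start of each round trip.

    Simplified Reno-style model (an assumption for illustration): slow start
    doubles the window each round trip, congestion avoidance adds one segment
    per round trip, and a detected loss halves the window.
    """
    cwnd = 1
    history = []
    for rtt in range(rounds):
        history.append(cwnd)
        if rtt in loss_rounds:
            # Loss detected (assumed via duplicate ACKs): halve the window and
            # continue with the gradual increase instead of restarting at 1.
            ssthresh = max(cwnd // 2, 2)
            cwnd = ssthresh
        elif cwnd < ssthresh:
            cwnd *= 2  # slow start: the fast initial ramp-up
        else:
            cwnd += 1  # congestion avoidance: the gradual increase
    return history


print(window_trajectory(12, loss_rounds={8}))
# [1, 2, 4, 8, 16, 17, 18, 19, 20, 10, 11, 12]
```

The doubling, then the one-at-a-time climb, then the drop from 20 to 10 segments is the keg stand in numbers: keep pouring faster until something spills.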

If you prefer analogies, I always refer to TCP sliding windows as a Keg Stand (http://en.wikipedia.org/wiki/Keg_stand).

In the photo we see several gentlemen surrounding a keg, with one upside down performing a keg stand.

To perform a keg stand:

- Place both hands on top of the keg.

- One or two friends lift your feet over your head while you support your body weight on locked-out arms.

- Another friend places the keg’s nozzle in your mouth and turns it on.

- You swallow beer at full speed for as long as you can.

What the hell does this have to do with TCP flow control? I’m so glad you asked.

During a keg stand your friend is trying to push as much beer down your throat as you can handle, much like TCP increasing the window size to fill the end-to-end pipe. Both of your hands are occupied holding your own weight and your mouth has a beer hose in it, so, like TCP, you have no native congestion signaling mechanism. Just like TCP, the flow doesn’t slow until packets (or beer) drop: when you start to spill, they stop the flow.

So that’s an example of end-to-end congestion management. Within Ethernet, and FCoE specifically, we don’t have any native end-to-end congestion tools (remember, TCP is up at L4 and we’re hanging out with the cool kids at L2). No problem though, because we’re talking FCoE, right? FCoE is just an L1-L2 replacement for the Fibre Channel (FC) FC-0 and FC-1 layers, so we’ll just use FC end-to-end congestion management… Not so fast, boys and girls. FC does not have a standard for end-to-end congestion management; that’s right, our beautiful, over-engineered, lossless FC has no mechanism for handling network-wide, end-to-end congestion. That’s because it doesn’t need it.

FC is moving SCSI data, and SCSI is sensitive to dropped frames: latency is important, but lossless delivery is more important. To ensure a frame is never dropped, FC uses a hop-by-hop flow control known as buffer-to-buffer (B2B) credits. At a high level, each FC device knows the amount of buffer space available on the next-hop device based on the agreed-upon frame size (typically 2148 bytes). This means that a device will never send a frame to a next-hop device that cannot handle the frame. Let’s go back to the world of analogy.

Buffer-to-buffer credits:

The B2B credit system works the same way you’d have 10 Marines offload and stack a truckload of boxes (‘Forklift? We don’t need no stinking forklift.’). The best way to use 10 Marines to offload boxes is to line them up end-to-end, one in the truck and one at the other end to stack. Marine 1 in the truck initiates the send by grabbing a box and passing it to Marine 2; the box moves down the line until it gets to the target, Marine 10, who stacks it. Before any Marine hands another Marine a box, they look to ensure that Marine’s hands are empty, verifying they can handle the box and it won’t be dropped. Boxes move down the line until they are all offloaded and stacked. If anyone slows down or gets backed up, each Marine holds their box until the congestion is relieved.

In this analogy the Marine in the truck is the initiator/server and the Marine doing the stacking is the target/storage, with each Marine in between being a switch.

When two FC devices bring up a link, they exchange service parameters during login (for example, fabric login, FLOGI, when a node port attaches to a switch). During this process they agree on an FC frame size and exchange the number of dedicated frame buffers available on the link. A sender always keeps track of the available buffers on the receiving side of the link. The only real difference between this and my analogy is that each device (Marine) is typically able to handle more than one frame (box) at once.
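Here is a minimal sketch of that sender-side bookkeeping. The class and function names are hypothetical, the credit count is an arbitrary example value rather than anything a real port advertises, and each R_RDY is modelled as returning exactly one credit. What it shows: a sender with zero credits simply waits, so the receiver is never handed more frames than it has buffers for, no matter how slowly it drains.

```python
class BBCreditSender:
    """Sender-side buffer-to-buffer credit accounting for one FC link (sketch)."""

    def __init__(self, bb_credit: int) -> None:
        # Receive buffers the neighbour advertised at login (illustrative value).
        self.available = bb_credit
        self.sent = 0

    def can_send(self) -> bool:
        return self.available > 0

    def send_frame(self) -> None:
        if not self.can_send():
            raise RuntimeError("no credit left: the frame waits, it is never dropped")
        self.available -= 1
        self.sent += 1

    def receive_r_rdy(self) -> None:
        # The receiver emptied one buffer and signalled it with an R_RDY.
        self.available += 1


def peak_receiver_occupancy(bb_credit: int, frames: int, drain_every: int) -> int:
    """Fast sender, slow receiver that frees one buffer every `drain_every` ticks."""
    sender = BBCreditSender(bb_credit)
    buffered = peak = tick = 0
    while sender.sent < frames or buffered:
        tick += 1
        if buffered and tick % drain_every == 0:
            buffered -= 1            # slow receiver finally processes a frame
            sender.receive_r_rdy()   # ...and hands the credit back
        if sender.sent < frames and sender.can_send():
            sender.send_frame()
            buffered += 1
            peak = max(peak, buffered)
    return peak


print(peak_receiver_occupancy(bb_credit=4, frames=20, drain_every=3))  # 4
```

Because every frame sent costs one credit and every R_RDY returns one, buffered frames plus available credits always equals the advertised credit count, which is exactly the “never hand a box to full hands” rule from the Marines analogy.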

So if FC networks operate just fine without end-to-end congestion management, why do we need to implement a new mechanism in FCoE? Well, therein lies the rub. Do we need QCN? The answer is really yes and no, and it will depend on design. FCoE today provides the same kind of hop-by-hop, lossless flow control as FC using two standards defined within Data Center Bridging (DCB): Enhanced Transmission Selection (ETS) and Priority-based Flow Control (PFC) (for more info on these see my DCB blog: http://www.definethecloud.net/?p=31). Basically, ETS provides a bandwidth guarantee without limiting, and PFC provides lossless delivery on an Ethernet network.
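Here is a rough sketch of what “a bandwidth guarantee without limiting” means in practice. It only computes the steady-state share each traffic class would end up with; the class names, the 50/50 split, and the proportional redistribution of unused bandwidth are my assumptions for illustration, and real switches enforce ETS with a hardware scheduler rather than anything like this function.

```python
def ets_shares(link_gbps, weights, demand):
    """Split link bandwidth among traffic classes, ETS style (illustrative only).

    weights: guaranteed share per class, in percent (assumed values).
    demand:  offered load per class, in Gbps.
    Each class is guaranteed its weighted share; bandwidth a class leaves
    unused is handed to classes that still want more, in proportion to weight.
    """
    alloc = {c: 0.0 for c in weights}
    active = {c for c in weights if demand.get(c, 0) > 0}
    remaining = float(link_gbps)
    while remaining > 1e-9 and active:
        total_weight = sum(weights[c] for c in active)
        grants = {c: remaining * weights[c] / total_weight for c in active}
        remaining = 0.0
        for c in list(active):
            take = min(grants[c], demand[c] - alloc[c])
            alloc[c] += take
            remaining += grants[c] - take      # unused grant goes back to the pool
            if demand[c] - alloc[c] <= 1e-9:   # class fully satisfied
                active.discard(c)
    return alloc


weights = {"fcoe": 50, "lan": 50}
print(ets_shares(10, weights, {"fcoe": 10, "lan": 10}))  # both busy: 5 and 5
print(ets_shares(10, weights, {"fcoe": 2, "lan": 10}))   # LAN borrows: 2 and 8
```

In the second call the LAN class gets 8 Gbps even though its guarantee is only 5 Gbps: the guarantee is a floor, not a cap, and as soon as FCoE wants its 5 Gbps back it gets it.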

Why QCN:

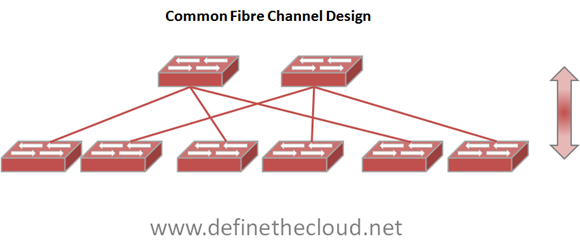

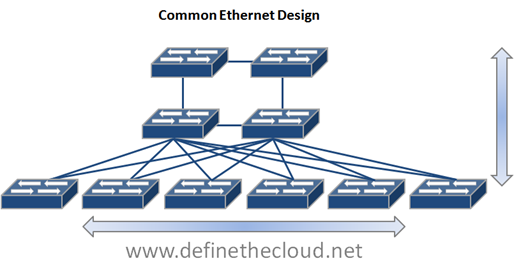

QCN was developed because of the differences in size, scale, and design between FC and Ethernet networks. Ethernet networks are usually large mesh or partial-mesh designs with multiple switches. FC designs fall into one of three major categories: collapsed core (single layer), core-edge (two layer), or, in rare cases for very large networks, edge-core-edge (three layer). This is because we typically have far fewer FC-connected devices than Ethernet devices (not every device needs consolidated storage/backup access).

If we were to design our FCoE networks so that every current Ethernet device supported FCoE and FCoE frames flowed end-to-end, QCN would be a benefit to ensure that point congestion didn’t clog the entire network. On the other hand, if we maintain a similar size and design for FCoE networks as we do for FC networks, there is no need for QCN.

Let’s look at some diagrams to better explain this:

In the diagrams above we see a couple of typical network designs. The Ethernet diagram shows core at the top, aggregation in the middle, and edge on the bottom, where servers would connect. The Fibre Channel design shows a core at the top with an edge at the bottom. Storage would attach to the core and servers would attach at the bottom. In both diagrams I’ve also shown typical frame flow for each traffic type. Within Ethernet, servers commonly communicate with one another as well as with network file systems, the WAN, etc. In an FC network the frame flow is much simpler: typically only initiator-to-target (server-to-storage) communication occurs. In this particular FC example there is little to no chance of a single frame flow causing a central network congestion point that could affect other flows, and that kind of central congestion is exactly where end-to-end congestion management comes into play.

What does QCN do:

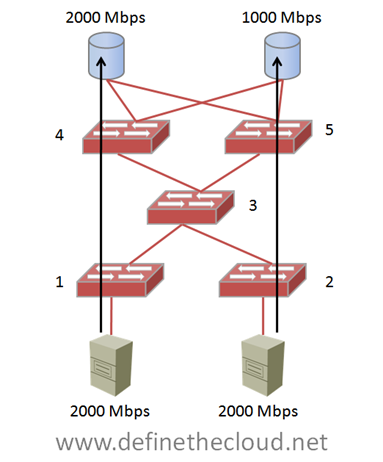

QCN moves congestion from the network center to the edge to avoid centralized congestion on DCB networks. Let’s take a look at a centralized congestion example (FC only for simplicity):

In the above example two 2Gbps hosts are sending full-rate frame flows to two storage devices. One of the storage devices is a 2Gbps device and can handle the full speed; the other is a 1Gbps device and cannot. If these rates are sustained, switch 3’s buffers will eventually fill and cause centralized congestion affecting frame flows to both switch 4 and switch 5. This means that the full-rate-capable devices would be affected by the single slower device. QCN is designed to detect this type of congestion and push it to the edge, slowing the initiator on the bottom right and avoiding overall network congestion.
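To show what “push it to the edge” means mechanically, here is a heavily simplified sketch of the two QCN roles applied to this example: a congestion point (switch 3’s queue toward the 1Gbps target) that computes a feedback value from how far its queue is above a target and how fast it is growing, and a reaction point (a rate limiter at the sending initiator) that cuts its rate when a congestion notification arrives. The feedback formula and constants (weight w = 2, decrease gain 1/128, feedback clamped to 63 so one cut is at most about half) are my reading of commonly cited QCN defaults, so treat them as assumptions; the queue units, target queue length, and tick size are made up. Real QCN samples frames rather than checking every tick, and its reaction point also runs a recovery phase that raises the rate again. That recovery is omitted here, which is why this sketch undershoots 1Gbps instead of settling near it.

```python
from dataclasses import dataclass

# Assumed parameters (commonly cited QCN defaults; illustrative only).
W = 2.0         # weight on queue growth
GD = 1 / 128    # multiplicative-decrease gain
FB_MAX = 63     # |Fb| clamp, standing in for the quantized feedback field


@dataclass
class CongestionPoint:
    """Switch egress queue that computes feedback Fb from its length."""
    q_eq: float          # hypothetical target queue length, in frames
    q_old: float = 0.0

    def feedback(self, q_len: float) -> float:
        q_off = q_len - self.q_eq        # how far above target the queue is
        q_delta = q_len - self.q_old     # how fast the queue is growing
        self.q_old = q_len
        fb = -(q_off + W * q_delta)
        return max(fb, -FB_MAX)          # negative Fb means "congested"


@dataclass
class ReactionPoint:
    """Rate limiter at the sending edge (the initiator's adapter)."""
    rate_gbps: float

    def on_cnm(self, fb: float) -> None:
        # A congestion notification carries Fb; cut the rate proportionally.
        self.rate_gbps *= 1 - GD * abs(fb)


def simulate(ticks, bottleneck_gbps=1.0, start_gbps=2.0,
             frames_per_gbps_tick=10.0, q_eq=20.0):
    """Decrease-only run of the 2Gbps -> 1Gbps example; (queue, rate) per tick."""
    cp, rp = CongestionPoint(q_eq), ReactionPoint(start_gbps)
    q, history = 0.0, []
    for _ in range(ticks):
        # Queue grows by the excess of the sending rate over the bottleneck.
        q = max(0.0, q + (rp.rate_gbps - bottleneck_gbps) * frames_per_gbps_tick)
        fb = cp.feedback(q)
        if fb < 0:
            rp.on_cnm(fb)  # notification goes back to the source, not the neighbours
        history.append((q, rp.rate_gbps))
    return history


for q, rate in simulate(10):
    print(f"queue={q:6.2f} frames  rate={rate:.3f} Gbps")
```

The design point the sketch illustrates: instead of switch 3 holding frames (and, through hop-by-hop flow control, eventually stalling the flow to the 2Gbps storage as well), the only device that slows down is the initiator talking to the 1Gbps target.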

This example is obviously not a good design and is only used to illustrate the concept. In fact, in a properly designed FC network with multiple paths between end points, central congestion is easily avoidable.

When moving to FCoE, if the network is designed such that FCoE frames pass through the entire full-mesh network shown in the common Ethernet design above, there would be a greater chance of central congestion. If the central switches were DCB-capable but not Fibre Channel Forwarders (FCFs), QCN could play a part in pushing that congestion to the edge.

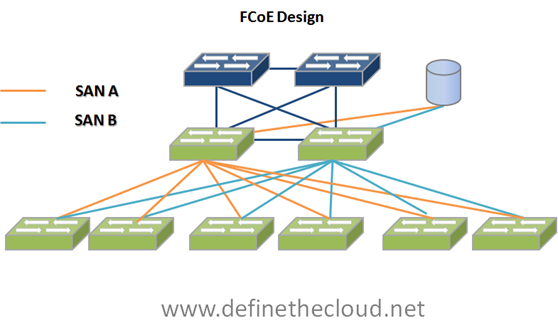

If, on the other hand, you design FCoE in a similar fashion to current FC networks, QCN will not be necessary. The diagram above shows an example of this.

The above design incorporates FCoE into the existing LAN core, aggregation, and edge design without clogging the LAN core with unneeded FCoE traffic. Each server is dual-connected to the common Ethernet mesh and redundantly connected to FCoE SAN A and B. This design is extremely scalable and will provide more than enough ports for most FCoE implementations.

Summary:

QCN, like other congestion management tools before it such as FECN and BECN, has significant use cases. As far as FCoE deployments go, QCN is definitely not a requirement and, depending on design, may provide no benefit for FCoE. It’s important to remember that the DCB standards are there to enhance Ethernet as a whole, not just for FCoE. FCoE utilizes ETS and PFC for lossless frame delivery and bandwidth control, but the FCoE standard is a separate entity from DCB.

Also remember that FCoE is an excellent tool for virtualization, which reduces physical server count. This means that we will continue to require fewer and fewer FCoE ports overall, especially as 40Gbps and 100Gbps are adopted. Scaling FCoE networks further than today’s FC networks will most likely not be a requirement.
