One of the highlights of my trip to lovely San Francisco for VMworld was getting to join Scott Lowe and Brad Hedlund for an off-the-cuff whiteboard session. I use the term join loosely because I contributed nothing other than a set of ears. We discussed a few things, all revolving around virtualization (imagine that at VMworld). One of the things we discussed was virtual switching, and Scott mentioned a total lack of good documentation on VEPA, VN-tag and the differences between the two. I’ve also found this to be true. The documentation that is readily available is:

- Marketing fluff

- Vendor FUD

- Standards body documents which might as well be written in a Klingon/Hieroglyphics slang manifestation

This blog is my attempt to demystify VEPA and VN-tag and place them both alongside their applicable standards, and by that I mean contribute to the extensive garbage info revolving around them both. Before we get into them both we’ll need to understand some history and the problems they are trying to solve.

First let’s get physical. Looking at a traditional physical access layer we have two traditional options for LAN connectivity: Top-of-Rack (ToR) and End-of-Row (EoR) switching topologies. Both have advantages and disadvantages.

EoR:

EoR topologies rely on larger switches placed on the end of each row for server connectivity.

Pros:

- Fewer management points

- Smaller Spanning-Tree Protocol (STP) domain

- Less equipment to purchase, power and cool

Cons:

- More above/below rack cable runs

- More difficult cable modification, troubleshooting and replacement

- More expensive cabling

ToR:

ToR utilizes a switch at the top of each rack (or close to it.)

Pros:

- Less cabling distance/complexity

- Lower cabling costs

- Faster move/add/change for server connectivity

Cons:

- Larger STP domain

- More management points

- More switches to purchase, power and cool

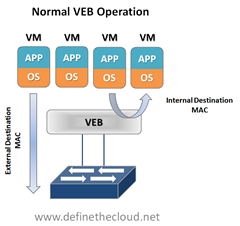

Now let’s virtualize. In a virtual server environment the most common way to provide Virtual Machine (VM) switching connectivity is a Virtual Ethernet Bridge (VEB); in VMware we call this a vSwitch. A VEB is basically software that acts much like a Layer 2 hardware switch, providing inbound/outbound and inter-VM communication. A VEB works well to aggregate multiple VMs’ traffic across a set of links as well as provide frame delivery between VMs based on MAC address. Where a VEB is lacking is network management, monitoring and security. Typically a VEB is invisible and not configurable from the network team’s perspective. Additionally, any traffic handled by the VEB internally cannot be monitored or secured by the network team.
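To picture the forwarding decision a VEB makes, here is a minimal Python sketch. It is a toy model under my own assumptions, not how any particular vSwitch is implemented: the class name, port names and MAC addresses are invented for illustration, and VLANs and broadcast handling are left out.

```python
from dataclasses import dataclass, field


@dataclass
class VirtualEthernetBridge:
    """Toy model of a VEB (vSwitch): forwards frames by destination MAC."""

    uplink: str = "uplink"
    # MAC address -> local virtual port name (one entry per attached VM)
    mac_table: dict = field(default_factory=dict)

    def attach_vm(self, mac: str, port: str) -> None:
        self.mac_table[mac] = port

    def forward(self, src_mac: str, dst_mac: str) -> str:
        """Return the name of the port the frame leaves on."""
        # Local destination: switched inside the host, never seen upstream.
        if dst_mac in self.mac_table:
            return self.mac_table[dst_mac]
        # Anything else goes out the physical uplink.
        return self.uplink


veb = VirtualEthernetBridge()
veb.attach_vm("00:50:56:00:00:01", "vnic1")
veb.attach_vm("00:50:56:00:00:02", "vnic2")

local = veb.forward("00:50:56:00:00:01", "00:50:56:00:00:02")
external = veb.forward("00:50:56:00:00:01", "00:1b:21:aa:bb:cc")
print(local, external)
```

The VM-to-VM frame never touches the uplink, which is exactly why the network team cannot see or police it.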

Pros:

- Local switching within a host (physical server)

- Less network traffic

- Possibly faster switching speeds

- Common well understood deployment

- Implemented in software within the hypervisor with no external hardware requirements

Cons:

- Typically configured and managed within the virtualization tools by the server team

- Lacks monitoring and security tools commonly used within the physical access layer

- Creates a separate management/policy model for VMs and physical servers

Together, the physical access layer trade-offs and the VEB limitations above are the two issues that VEPA and VN-tag look to address in some way. Now let’s look at each individually and what it tries to solve.

Virtual Ethernet Port Aggregator (VEPA):

VEPA is a standard being led by HP for providing consistent network control and monitoring for Virtual Machines (of any type). VEPA has been used by the IEEE as the basis for 802.1Qbg ‘Edge Virtual Bridging.’ VEPA comes in two major forms: a standard mode, which requires minor software updates to the VEB functionality as well as upstream switch firmware updates, and a multi-channel mode, which requires additional intelligence on the upstream switch.

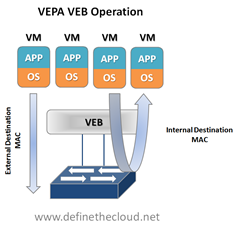

Standard Mode:

The beauty of VEPA in its standard mode is in its simplicity. If you’ve worked with me you know I hate complex designs and systems; they just lead to problems. In the standard mode the software upgrade to the VEB in the hypervisor simply forces each VM frame out to the external switch regardless of destination. This causes no change for destination MAC addresses external to the host, but for destinations within the host (another VM in the same VLAN) it forces that traffic to the upstream switch, which forwards it back instead of the VEB handling it internally (called a hairpin turn). It’s this hairpin turn that causes the requirement for the upstream switch to have updated firmware: standard bridge forwarding behavior prevents a switch from forwarding a frame back down the port it was received on (like the saying goes, don’t egress where you ingress). The firmware update allows the physical host and the upstream switch to negotiate a VEPA port, which then allows this hairpin turn. Let’s step through some diagrams to visualize this.


Again, the beauty of this VEPA mode is in its simplicity. VEPA simply forces VM traffic to be handled by an external switch. This allows each VM frame flow to be monitored, managed and secured with all of the tools available to the physical switch. This does not provide any type of individual tunnel for the VM, or a configurable switchport, but it does allow for things like flow statistic gathering, ACL enforcement, etc. Basically we’re just pushing the MAC forwarding decision to the physical switch and allowing that switch to perform whatever functions it has available on each transaction. The drawback here is that we are now performing one ingress and one egress on the physical link for each frame that was previously handled internally. This means that there are bandwidth and latency considerations to be made. Functions like Single Root I/O Virtualization (SR-IOV) and DirectPath I/O can alleviate some of the latency issues when implementing this. Like any technology there are trade-offs that must be weighed. In this case the added control and functionality should outweigh the bandwidth and latency additions.
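To make the hairpin turn concrete, here is a minimal Python sketch of the behavior described above. The class and function names (`UpstreamSwitch`, `vepa_send`), the `reflective_relay` flag and the port label `eth1/1` are made up for illustration, and the model ignores VLANs, real flooding and the actual VEPA port negotiation.

```python
class UpstreamSwitch:
    """Toy physical switch. reflective_relay stands in for VEPA-capable firmware."""

    def __init__(self, reflective_relay: bool):
        self.reflective_relay = reflective_relay
        self.mac_table = {}  # MAC address -> switch port where it lives
        self.seen = []       # every frame the switch gets a chance to inspect

    def learn(self, mac: str, port: str) -> None:
        self.mac_table[mac] = port

    def forward(self, in_port: str, src_mac: str, dst_mac: str) -> str:
        # The switch sees the frame, so ACLs and flow statistics could apply here.
        self.seen.append((src_mac, dst_mac))
        out_port = self.mac_table.get(dst_mac)
        if out_port is None:
            return "flood"
        # Traditional behavior: never send a frame back out its ingress port.
        if out_port == in_port and not self.reflective_relay:
            return "drop"
        return out_port


def vepa_send(switch: UpstreamSwitch, host_port: str, src_mac: str, dst_mac: str) -> str:
    """VEPA sends every VM frame upstream, even when the destination VM is local."""
    return switch.forward(host_port, src_mac, dst_mac)


VM1, VM2 = "00:50:56:00:00:01", "00:50:56:00:00:02"

legacy = UpstreamSwitch(reflective_relay=False)
vepa_ready = UpstreamSwitch(reflective_relay=True)
for sw in (legacy, vepa_ready):
    # Both VMs sit behind the same physical switch port.
    sw.learn(VM1, "eth1/1")
    sw.learn(VM2, "eth1/1")

legacy_result = vepa_send(legacy, "eth1/1", VM1, VM2)
hairpin_result = vepa_send(vepa_ready, "eth1/1", VM1, VM2)
print(legacy_result, hairpin_result)
```

Without the firmware update the VM-to-VM frame is dropped; with it the frame comes back down the same port, and the switch has had its chance to inspect it along the way.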

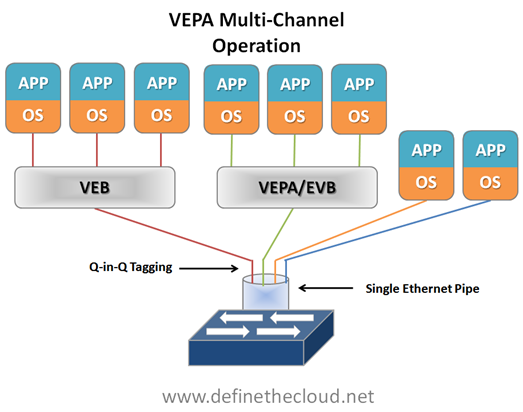

Multi-Channel VEPA:

Multi-Channel VEPA is an optional enhancement to VEPA that also comes with additional requirements. Multi-Channel VEPA allows a single Ethernet connection (switchport/NIC port) to be divided into multiple independent channels or tunnels. Each channel or tunnel acts as a unique connection to the network. Within the virtual host these channels or tunnels can be assigned to a VM, a VEB, or to a VEB operating with standard VEPA. In order to achieve this goal Multi-Channel VEPA utilizes a tagging mechanism commonly known as Q-in-Q (defined in 802.1ad), which uses a service tag ‘S-Tag’ in addition to the standard 802.1Q VLAN tag. This provides the tunneling within a single pipe without affecting the 802.1Q VLAN. This method requires Q-in-Q capability within both the NICs and upstream switches, which may require hardware changes.
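As a rough illustration of the Q-in-Q stacking described above, the Python sketch below builds and parses the two tags. The TPID values are the ones I know from 802.1ad (0x88A8) and 802.1Q (0x8100); the function names and the idea of using the S-Tag VLAN ID as a channel number are my own assumptions for the example, not something taken from the VEPA proposal.

```python
import struct

TPID_S_TAG = 0x88A8  # 802.1ad service tag (outer)
TPID_C_TAG = 0x8100  # 802.1Q customer VLAN tag (inner)


def tci(vid: int, pcp: int = 0, dei: int = 0) -> int:
    """Build the 16-bit Tag Control Information: PCP(3) | DEI(1) | VID(12)."""
    if not 0 <= vid <= 0xFFF:
        raise ValueError("VLAN ID must fit in 12 bits")
    return ((pcp & 0x7) << 13) | ((dei & 0x1) << 12) | vid


def qinq_tags(channel_svid: int, vlan_id: int) -> bytes:
    """Return the 8 bytes of stacked tags: outer S-Tag, then inner C-Tag."""
    return struct.pack(
        "!HHHH", TPID_S_TAG, tci(channel_svid), TPID_C_TAG, tci(vlan_id)
    )


def parse_qinq_tags(tags: bytes) -> tuple:
    """Return (channel S-VID, VLAN ID) from an 8-byte tag stack."""
    s_tpid, s_tci, c_tpid, c_tci = struct.unpack("!HHHH", tags)
    if (s_tpid, c_tpid) != (TPID_S_TAG, TPID_C_TAG):
        raise ValueError("not an S-Tag + C-Tag stack")
    return s_tci & 0xFFF, c_tci & 0xFFF


# Hypothetical example: channel 2 carrying VLAN 10.
tags = qinq_tags(channel_svid=2, vlan_id=10)
print(tags.hex())
print(parse_qinq_tags(tags))
```

The inner 802.1Q tag is untouched by the outer one, which is why the VLAN carried inside each channel is not affected.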

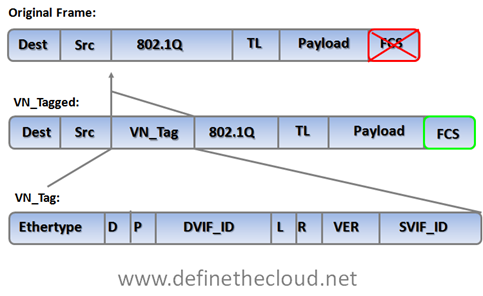

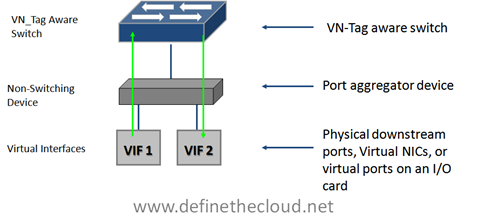

The VN-Tag standard was proposed by Cisco and others as a potential solution to both of the problems discussed above: network awareness and control of VMs, and access layer extension without extending management and STP domains. VN-Tag is the basis of 802.1Qbh ‘Bridge Port Extension.’ Using VN-Tag, an additional header is added into the Ethernet frame which allows individual identification of virtual interfaces (VIFs).

The tag contents perform the following functions:

| Field | Function |
| --- | --- |
| Ethertype | Identifies the VN tag |
| D | Direction, 1 indicates that the frame is traveling from the bridge to the interface virtualizer (IV). |
| P | Pointer, 1 indicates that a vif_list_id is included in the tag. |
| vif_list_id | A list of downlink ports to which this frame is to be forwarded (replicated). (multicast/broadcast operation) |
| Dvif_id | Destination vif_id of the port to which this frame is to be forwarded. |
| L | Looped, 1 indicates that this is a multicast frame that was forwarded out the bridge port on which it was received. In this case, the IV must check the Svif_id and filter the frame from the corresponding port. |
| R | Reserved |
| VER | Version of the tag |
| SVIF_ID | The vif_id of the source of the frame |

The most important components of the tag are the source and destination VIF IDs which allow a VN-Tag aware device to identify multiple individual virtual interfaces on a single physical port.
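For readers who think in bits, here is a hedged Python sketch that packs and unpacks the fields listed above. The post does not give field widths or the EtherType value, so treat these as assumptions taken from commonly published descriptions of the pre-standard tag: EtherType 0x8926, a 14-bit destination field (Dvif_id or vif_list_id), a 2-bit version and a 12-bit Svif_id. The class name and example VIF numbers are invented for illustration.

```python
import struct
from dataclasses import dataclass

VNTAG_ETHERTYPE = 0x8926  # assumption: value not given in the post


@dataclass
class VNTag:
    direction: int  # D: 1 = bridge -> interface virtualizer
    pointer: int    # P: 1 = dst holds a vif_list_id instead of a Dvif_id
    dst: int        # Dvif_id or vif_list_id (assumed 14 bits)
    looped: int     # L: 1 = multicast frame sent back out its ingress port
    version: int    # VER (assumed 2 bits)
    src_vif: int    # Svif_id (assumed 12 bits)

    def pack(self) -> bytes:
        """Return 6 bytes: EtherType followed by a 32-bit field word."""
        word = (
            ((self.direction & 0x1) << 31)
            | ((self.pointer & 0x1) << 30)
            | ((self.dst & 0x3FFF) << 16)
            | ((self.looped & 0x1) << 15)
            # bit 14 is the reserved (R) bit and is left as zero
            | ((self.version & 0x3) << 12)
            | (self.src_vif & 0xFFF)
        )
        return struct.pack("!HI", VNTAG_ETHERTYPE, word)

    @classmethod
    def unpack(cls, data: bytes) -> "VNTag":
        ethertype, word = struct.unpack("!HI", data[:6])
        if ethertype != VNTAG_ETHERTYPE:
            raise ValueError("not a VN-Tag")
        return cls(
            direction=(word >> 31) & 0x1,
            pointer=(word >> 30) & 0x1,
            dst=(word >> 16) & 0x3FFF,
            looped=(word >> 15) & 0x1,
            version=(word >> 12) & 0x3,
            src_vif=word & 0xFFF,
        )


# Hypothetical frame heading from the bridge down to VIF 5.
tag = VNTag(direction=1, pointer=0, dst=5, looped=0, version=0, src_vif=0)
raw = tag.pack()
print(raw.hex())
print(VNTag.unpack(raw))
```

Whatever the exact layout ends up being in the standard, the idea is the same: the source and destination VIF IDs ride along with the frame so the upstream device can tell virtual interfaces apart on one physical port.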

VN-Tag can be used to uniquely identify and provide frame forwarding for any type of virtual interface (VIF). A VIF is any individual interface that should be treated independently on the network but shares a physical port with other interfaces. Using a VN-Tag capable NIC or software driver these interfaces could potentially be individual virtual servers. These interfaces can also be virtualized interfaces on an I/O card (e.g. 10 virtual 10G ports on a single 10G NIC), or a switch/bridge extension device that aggregates multiple physical interfaces onto a set of uplinks and relies on an upstream VN-tag aware device for management and switching.

Because of VN-tag’s versatility it’s possible to utilize it for both bridge extension and virtual networking awareness. It also has the advantage of allowing for individual configuration of each virtual interface as if it were a physical port. The disadvantage of VN-Tag is that because it utilizes additions to the Ethernet frame the hardware itself must typically be modified to work with it. VN-tag aware switch devices are still fully compatible with traditional Ethernet switching devices because the VN-tag is only used within the local system. For instance, in the diagram above VN-tags would be used between the VN-tag aware switch at the top of the diagram and the VIF, but the VN-tag aware switch could be attached to any standard Ethernet switch. VN-tags would be written on ingress to the VN-tag aware switch for frames destined for a VIF, and VN-tags would be stripped on egress for frames destined for the traditional network.

Where does that leave us?

We are still very early in the standards process for both 802.1Qbh and 802.1Qbg, and things are subject to change. From what it looks like right now the standards body will be utilizing VEPA as the basis for providing physical type network controls to virtual machines, and VN-tag to provide bridge extension. Because of the way in which each is handled they will be compatible with one another, meaning a VN-tag based bridge extender would be able to support VEPA aware hypervisor switches.

Equally as important is what this means for today and today’s hardware. There is plenty of Fear, Uncertainty and Doubt (FUD) material out there intended to prevent product purchase because the standards process isn’t completed. The question becomes what’s true and what isn’t. Let’s take care of the answers FAQ style:

Will I need new hardware to utilize VEPA for VM networking?

No, for standard VEPA mode only a software change will be required on the switch and within the hypervisor. For Multi-Channel VEPA you may require new hardware, as it utilizes Q-in-Q tagging, which is not typically an access layer switch feature.

Will I need new hardware to utilize VN-Tag for bridge extension?

Yes, VN-tag bridge extension will typically be implemented in hardware so you will require a VN-tag aware switch as well as VN-tag based port extenders.

Will hardware I buy today support the standards?

That question really depends on how much change occurs with the standards before finalization and which tool you’re looking to use:

- Standard VEPA – Yes

- Multi-Channel VEPA – Possibly (if Q-in-Q is supported)

- VN-Tag – Possibly

Are there products available today that use VEPA or VN-Tag?

Yes, Cisco has several products that utilize VN-Tag: the Virtual Interface Card (VIC), Nexus 2000, and the UCS I/O Module (IOM). Additionally HP’s FlexConnect technology is the basis for multi-channel VEPA.

Summary:

VEPA and VN-tag both look to address common access layer network concerns and both are well on their way to standardization. VEPA looks to be the chosen method for VM aware networking and VN-Tag for bridge extension. Devices purchased today that rely on pre-standards versions of either protocol should maintain compatibility with the standards as they progress, but it’s not guaranteed. That being said, standards are not required for operation and effectiveness, and most start as unique features which are then submitted to a standards body.

Hi Joe!

Great post. Many folks out there needed this info laid out this simply. Great job!

(I apologize in advance for the long comment but this is a great topic and can be very technical. I think we’ll be seeing lots more ‘long comments’ before we consider the horse beaten to death. :-))

You said “Are there products available today that use VEPA? HP’s FlexConnect technology is the basis for multi-channel VEPA.”

I’d agree that HP’s Flex-10 is the basis for multi-channel, but not multi-channel VEPA. Many will assume that “basis for” means that Virtual Connect actually does multi-channel VEPA today, and it doesn’t. Some may say I’m being picky on terminology, but I think it’s an important distinction – especially when someone asks “are there products out there that use VEPA”. The answer today is ‘No. No shipping products today use VEPA’. Are there products out there that use QnQ for discerning ‘virtual channels’ over a single channel? Yes. Flex-10 is an example. Also, “switchport mode dot1q-tunnel” on a Cisco switch is another example of the use of QnQ to allow multiple virtual channels (VLANs) over a single channel (VLAN).

As of the latest firmware release, Virtual Connect (Flex-10) doesn’t have any ‘VEPA-enabling intelligence’ (ability to reflectively relay, or hairpin, L2 switched frames over the same physical switch port) exposed to the customer. For Virtual Connect, that means, over a single physical Flex-10 NIC port, Flex-10 cannot send a VM’s frame down FlexNIC 1 (channel 1) to an external switch (Virtual Connect switch) for switching (hairpin mode) and then return on FlexNIC 2 (channel 2) to another VM. In fact, Virtual Connect explicitly prevents you from assigning the same VLAN to FlexNICs on the same Flex-10 physical port for exactly that reason – Virtual Connect Flex-10 doesn’t support reflective relay for its multi-channel feature (as its firmware ships today). If Virtual Connect supported VEPA/VEPA multi-channel today, it should allow switching of channel-to-channel traffic via a single Virtual Connect switch port…and it doesn’t. Could it be enabled to do so? I’m sure it could – just like many Cisco switches could. But, as it ships today, Virtual Connect doesn’t. Therefore, it’s not the basis of multi-channel VEPA.

Even if using multiple VEBs, Virtual Connect still doesn’t support multi-channel VEPA. To do so, Virtual Connect Flex-10 would have to allow you to do the following:

1. Create two channels (FlexNIC 1 and FlexNIC 2) on the same physical Flex-10 NIC port

2. Create two VEBs (vSwitches) and assign a VM to each

3. Put both VMs on VLAN 10

4. Assign both channels (FlexNICs) to VLAN 10

5. From VM 1 (10.1.1.1) ping VM 2 (10.1.1.2). The VMs get to each other via this path: VM 1 -> VEB 1 -> Channel 1 (FlexNIC 1) -> Virtual Connect downlink 1 -> Virtual Connect hairpin mode -> Virtual Connect downlink 1 (same downlink as rx) -> Channel 2 (FlexNIC 2) -> VEB 2 -> VM 2.

In the steps above, you can’t get past step 4 with Virtual Connect – the UI won’t allow it. Then, step 5 requires Virtual Connect to ‘hairpin’ the frame (in and out on the same downlink) and Virtual Connect won’t do that today.

I refer to a graphic from the Virtual Connect User Guide for those that like pictures… See page 95 at http://bit.ly/brjxbX. If Virtual Connect supported multi-channel VEPA, then the red FlexNIC should be able to be assigned to the same VLAN as the green, blue, or purple FlexNIC…and it can’t. That’s because Virtual Connect doesn’t support hairpinning (VEPA) today.

To summarize, VEPA multi-channel means hairpinning frames from/to channels on the same physical NIC/switch port. In shipping firmware today, Virtual Connect Flex-10 does QnQ but not reflective relay (required for VEPA). As such, I can agree that Virtual Connect is the basis for multi-channel but not VEPA multi-channel.

One last point of clarity about VEPA that I think is important – it’s a host-side entity only. There is nothing in the ‘network’ that is VEPA. Switches don’t “have the VEPA feature”; switches allow VEPA to exist by enabling the reflective relay (hairpin) feature. VEPA is an entity within a server that works in conjunction with reflective relay within a switch.

Sean,

Thanks for the feedback and additional info! I definitely should have clarified more on FlexConnect being the basis for multi-channel VEPA. As you state, VEPA is not in use today; my point was that multi-channel VEPA is based (loosely) on the Q-in-Q use of FlexConnect for its virtual lanes. The details you provide are fantastic, thanks!

Joe

Joe, nice write-up. I was going to do the same because I had a lot of questions regarding VEPA and VN-tag. Now I don’t have to.

Mike

Mike,

I’m glad it helped, this is definitely a confusing subject. Scott Lowe put the idea in my head and I realized I needed to do some homework on the whole subject myself. I’m glad the post is helpful.

Joe

Joe,

Couple of quick comments:

1) VEPA cannot be cascaded like can be done with VNTag. Every blade switch or top of rack switch will be a VEPA point of management. With VNTag, the adapter in the server, the blade switch, and the ToR switch can be an unmanaged extension of logical ports from an upstream switch. This capability is here today in the form of “fabric extenders” and “Virtual Interface Cards” from Cisco. This allows for a central point of management for VM and server networking spanning 320 blade servers, for example (Cisco UCS).

2) While VEPA and VNTag could technically coexist, there would be no reason to do so; the two are mutually exclusive in what they achieve (moving VM packets through a phy switch). VNTag can be extended from the controlling switch all the way to the VM in the host.

If you think of VNTag as what it really is: “cable virtualization” corresponding to “interface virtualization” you will begin to see that it preserves the very familiar 1:server to 1:switchport management model. With VEPA, the management model has to completely change to Many:Servers to 1:switchport.

Great post! Was fun meeting you at VMworld 2010.

Cheers,

Brad

(CISCO)

Brad,

Thanks for the comment and all the detail!

I could see VEPA and VN-Tag coexisting where VEPA is being used to push VM traffic out of the hypervisor and VN-tag is being used for bridge extension, i.e. any given server running a hypervisor pushing VM switching out via VEPA but connecting to a Nexus 2000 style bridge extender, at which point an upstream VN-tag aware device (Nexus 5000) handles the switching.

While that model would make sense, I’d definitely prefer having the Cisco VIC in place to provide individual interfaces per VM for the switchport based controls, but that won’t work for non-Cisco servers.

Joe

Joe,

I see your point, the two could co-exist, but at different places in the infrastructure, one place being the server, the other place being the network, but co-existing at the same places is mutually exclusive.

As for non-Cisco servers, if VEPA is standardized to the point of connecting to a non-HP network (via 802.1Qbg), then chances are the standard version of VN-Tag (802.1Qbh) will be there too, providing similar advantages over 802.1Qbg (cascading, 1:vm to 1:switchport model) in a multi-vendor implementation.

Having said that, Cisco is committed to supporting both 802.1Qbg and 802.1Qbh in the DC switching products where it makes sense and when the standards are final. HP, to my knowledge, has stated the same. The two companies have buried the hatchet in that regard.

Cheers,

Brad

Joe, excellent initiative. I appreciate the good info….

Can you clarify this statement?

From what it looks like right now the standards body will be utilizing VEPA as the basis for providing physical type network controls to virtual machines, and VN-tag to provide bridge extension.

I don’t understand the difference between forcing VM traffic out to the adjacent switch and “bridge extension”?

Joe,

Thanks for reading and the comment. VEPA is designed specifically for virtual machines to provide the ability to perform network policies and monitoring on an individual VM basis. Within base VEPA there are no hardware requirements for the switch or NIC, just firmware requirements within the hypervisor and connected switch, so there is no need for a hardware upgrade. With multi-channel VEPA the only requirement is a switch and NIC that are able to perform Q-in-Q tagging.

VN-tag on the other hand is designed to extend a physical bridge/switch to another physical device. By extend I mean attach a remote device that doesn’t make forwarding decisions on its own, require individual management, or participate in Spanning-Tree Protocol (STP). This is applicable to remote line cards such as the Cisco Nexus 2000, which can be attached to a Nexus 7000 or 5000 and provide locally cabled ports and increased switch density without adding management or STP domains. This can also be applied to devices like the Cisco Virtual Interface Card (VIC), which virtualizes 2 upstream 10G ports into any combination of Fibre Channel HBA and 10GE ports within the server up to the current limit of 58.

Both of these are hardware implementations of extending a bridge.

Using the example of the VIC you can then use the greater number of interfaces to provide each VM its own NIC and/or HBA, which provides physical levels of network control and monitoring on a per-VM basis.

Hopefully that helps clear things up a bit, if not let me know.

Joe

Very good information – easy to understand with the necessary details!

Thanks Tom! I learned from some great people, yourself included.

Best explanation I’ve read – many thanks

I’d really appreciate your view now we are over 1 year down the track.

Chris,

Thanks for reading and the comment, I’m glad you liked it!

Joe

Quick note for future google searchers: Juniper switches support VEPA with the syntax “port-mode tagged-access”

Dan,

Thanks for reading and the additional info!

Joe

It is a nice write-up. It clears a lot of questions regarding VEPA and VN-Tag. Great work!
