What Network Virtualization Isn’t

Brad Hedlund recently posted an excellent blog on Network Virtualization. Network Virtualization is the label used by Brad’s employer VMware/Nicira for their implementation of SDN. Brad’s article does a great job of outlining the need for changes in networking in order to support current and evolving application deployment models. He also correctly points out that networking has lagged behind the rest of the data center as technical and operational advancements have been made.

Network configuration today is laughably archaic when compared to storage, compute and even facilities. It is still the domain of CLI wizards hacking away on keyboards to configure individual devices. VMware brought advancements like automatic workload migration based on resource utilization to the compute environment. To support this behavior on the network, an admin must manually define the appropriate configuration on each port that workload may access and on every port connecting the two. This is time-consuming, costly and error-prone. Brad is right, this is broken.

Brad also correctly points out that network speeds, feeds and packet delivery are adequately evolving and that the friction lies in configuration, policy and service delivery. These essential network components are still far too static to keep pace with application deployments. The network needs to evolve, and rapidly, in order to catch up with the rest of the data center.

Brad and I do not differ on the problem(s), or better stated: we do not differ on the drivers for change. We do, however, differ on the solution. Let me say up front that Brad and I both work for HW/SW vendors with differing solutions to the problem and differing visions of the future. Feel free to write the rest of this off as mindless drivel or vendor Kool-Aid; I ain’t gonna hate you for it.

Brad makes the case that Network Virtualization is equivalent to server virtualization, and from this simple assumption he poses it as the solution to current network problems.

Let’s start at the top: don’t be fooled by emphatic statements such as Brad’s that network virtualization is analogous to server virtualization. It is not; this is an apples and oranges discussion, with network virtualization being the orange, where you must peel the rind to get to the fruit. Don’t take my word for it. One of Brad’s colleagues, Scott Lowe, a man much smarter than I, says it best:

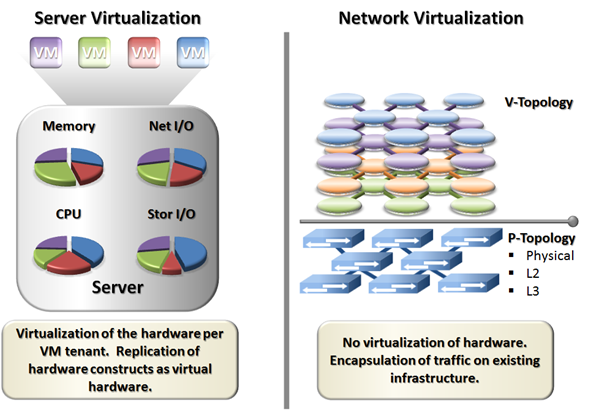

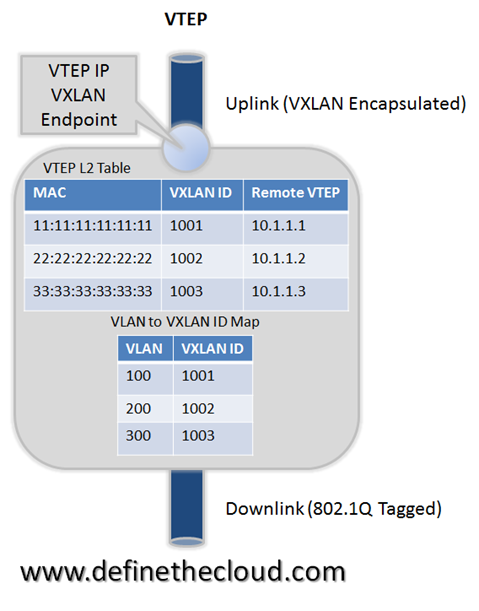

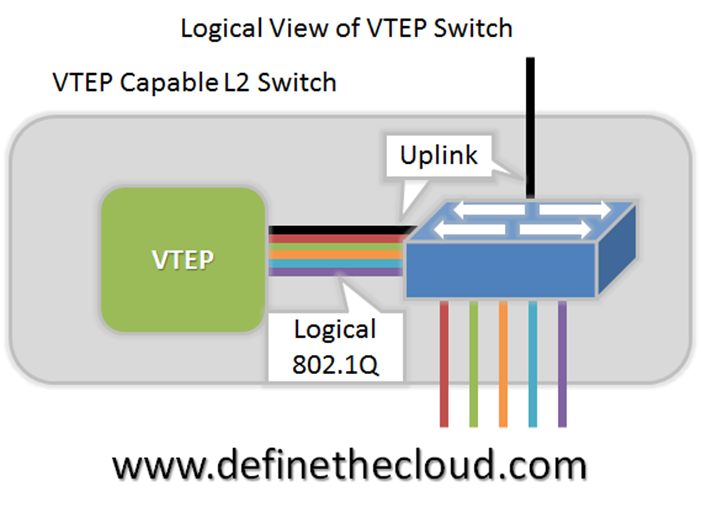

The issue is that these two concepts are implemented in a very different fashion. Where server virtualization provides full visibility and partitioning of the underlying hardware, network virtualization simply provides a packet encapsulation technique for frames on the wire. The diagram below better illustrates our two fruits: apples and oranges.

As the diagram illustrates we are not working with equivalent approaches. Network virtualization would require partitioning of switch CPU, TCAM, ASIC forwarding, bandwidth etc. to be a true apples-to-apples comparison. Instead it provides a simple wrapper to encapsulate traffic on the underlying Layer 3 infrastructure. These are two very different virtualization approaches.
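To make the "simple wrapper" point concrete, here is a minimal sketch of what an overlay encapsulation adds to a frame. I am assuming a VXLAN-style 8-byte header (a flags byte plus a 24-bit virtual network ID, per RFC 7348) purely for illustration; Brad's post does not tie the argument to one encapsulation, and the function names here are my own. The outer Ethernet/IP/UDP headers that a real tunnel endpoint would also add are left out.

```python
import struct
from typing import Tuple

# "I" flag from RFC 7348: set when the VNI field is valid.
VXLAN_FLAG_VNI_VALID = 0x08


def vxlan_encapsulate(inner_frame: bytes, vni: int) -> bytes:
    """Prepend a VXLAN-style header to an inner Ethernet frame (illustrative helper)."""
    if not 0 <= vni < 2 ** 24:
        raise ValueError("VNI must fit in 24 bits")
    # Header layout: flags (1 byte), reserved (3), VNI (3), reserved (1).
    header = struct.pack("!II", VXLAN_FLAG_VNI_VALID << 24, vni << 8)
    return header + inner_frame


def vxlan_decapsulate(packet: bytes) -> Tuple[int, bytes]:
    """Strip the header and return (vni, inner_frame) (illustrative helper)."""
    if len(packet) < 8:
        raise ValueError("packet shorter than the 8-byte header")
    flags_word, vni_word = struct.unpack("!II", packet[:8])
    if not (flags_word >> 24) & VXLAN_FLAG_VNI_VALID:
        raise ValueError("VNI-valid flag not set")
    return vni_word >> 8, packet[8:]


# Hypothetical inner frame: 14 placeholder bytes standing in for a real frame.
inner = b"\xaa" * 14
packet = vxlan_encapsulate(inner, vni=5001)
vni, recovered = vxlan_decapsulate(packet)
```

Note what the wrapper carries: a tenant identifier and nothing else. Nothing in it describes or reserves switch CPU, TCAM entries, ASIC forwarding resources or bandwidth on the devices underneath, which is the gap between this and what a hypervisor does for a server.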

Brad makes his next giant leap in the “What is the Network” section. Here he makes the assumption that the network consists of only virtual workloads: “The ‘network’ we want to virtualize is the complete L2-L7 services viewed by the virtual machines,” and the rest of his blog focuses there. This is fine for those data center environments that are 100% virtualized, including servers, services and WAN connectivity, and that use server virtualization for all of those purposes. Those environments must also lack PaaS and SaaS systems that aren’t built on virtual servers, as those are also non-applicable to the remaining discussion. So anyone in those environments described will benefit from the discussion, anyone <crickets>.

So Brad and, presumably, VMware/Nicira (since network virtualization is their term) define the goal as taking “all of the network services, features, and configuration necessary to provision the application’s virtual network (VLANs, VRFs, Firewall rules, Load Balancer pools & VIPs, IPAM, Routing, isolation, multi-tenancy, etc.) – take all of those features, decouple it from the physical network, and move it into a virtualization software layer for the express purpose of automation.” So if you’re looking to build 100% virtualized server environments with no plans to advance up the stack into PaaS, etc., it seems you have found your Huckleberry.

What we really need is not a virtualized network overlay running on top of an L3 infrastructure with no communication or correlation between the two. What we really need is something another guy much smarter than me (Greg Ferro) described:

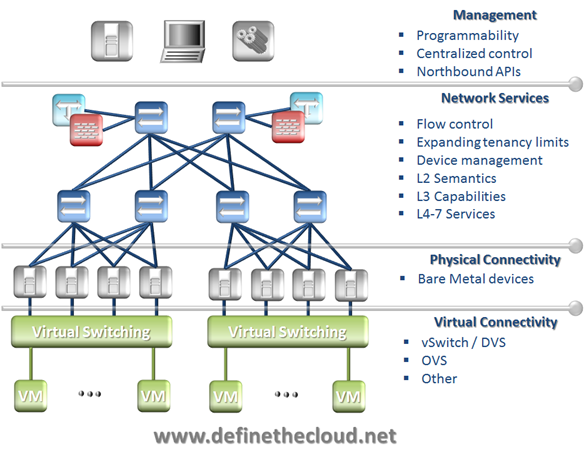

Abstraction, independence and isolation: that’s the key to moving the network forward. This is not provided by network virtualization. Network virtualization is a coat of paint on the existing building. Furthermore, that coat of paint is applied without stripping, priming, or removing that floral wallpaper your grandmother loved. The diagram below is how I think of it.

With a network virtualization solution you’re placing your applications on a house of cards built on a non-isolated infrastructure of legacy design and thinking. Without modifying the underlying infrastructure, network virtualization solutions are only as good as the original foundation. Of course you could replace the data center network with a non-blocking fabric and apply QoS consistently across that underlying fabric (most likely manually) as Brad Hedlund suggests below.

If this is the route you take, rebuilding the foundation before applying the network virtualization paint, is network virtualization still the color you want? If a refresh and reconfigure is required anyway, is this the best method for doing so?

The network has become complex and unmanageable due to things far older than VMware and server virtualization. We’ve clung to device-centric CLI configuration and the realm of keyboard wizards. Furthermore, we’ve bastardized originally abstracted network constructs such as VLAN, VRF, addressing, routing, and security, tying them together and creating a Frankenstein of a data center network. Are we surprised the villagers are coming with torches and pitchforks?

So overall I agree with Brad: the network needs to be fixed. We just differ on the solution; I’d like to see more than a coat of paint. Put lipstick on a pig and all you get is a pretty pig.