A-Game:

When I discuss my A-Game, I mean my go-to hardware vendor for a specific data center component. For example, I have an A-Game platform for:

- Storage

- SAN

- LAN (access layer LAN specifically; you don’t want me near your aggregation, core, or WAN)

- Servers and Blades (traditionally this has been one vendor for both)

As this post is about my server A-Game, I’ll leave the rest undefined for now and may blog about them later.

Over the last 4 years I’ve worked in some capacity or another as an independent customer advisor or consultant with several vendor options to choose from. This has been either with a VAR or with a strategic consulting firm such as www.fireflycom.net. In both cases the company typically leans one way or another, but my role has given me the flexibility to choose the right fit for the customer, not for my company or the vendors, which is what I personally strive to do. I’m not willing to stake my own integrity on what a given company wants to push today. I’ve written about my thoughts on objectivity in a previous blog (http://www.definethecloud.net/?p=112.)

Another rule regarding my A-Game is that it’s not a rule, it’s a launching point. I start with a specific hardware set in mind in order to visualize the customer need and analyze the best way to meet that need. If I hit a point of contention that negates the use of my A-Game, I’ll fluidly adapt my thinking and proposed architecture to one that better fits the customer. These points of contention may be technical, political, or business related:

- Technical: My A-Game doesn’t fit the customer’s requirement due to some technical factor, support, feature, etc.

- Political: My A-Game doesn’t fit the customer because they don’t want Vendor X (previous bad experience, hype, understanding, etc.)

- Business: My A-Game isn’t on an approved vendor list, or something similar.

If I hit one of these roadblocks I’ll shift my vendor strategy for the particular engagement without a second thought. The exception is when one of these roadblocks isn’t actually a roadblock and my A-Game definitely provides the best fit for the customer. In that case I’ll work with the customer to analyze the actual requirements and attempt to find ways around the roadblock.

Basically my A-Game is a product or product line that I’ve personally tested, worked with, and trust above the others. It is my starting point for any consultative engagement.

A quick read through my blog page or a jump through my links will show that I work closely with Cisco products, and it would be easy to assume that I am therefore inherently skewed towards Cisco. In reality the opposite is true: over the last few years I’ve had the privilege to select my job(s) and role(s) based on the products I want to work with.

My sordid UCS history:

As anyone who’s worked with me can attest to I’m not one to pull punches, feign friendliness, or accept what you try and sell me based on a flashy slide deck or eloquent rhetoric. If you’re presenting to me don’t expect me to swallow anything without proof, don’t expect easy questions, and don’t show up if you can’t put the hardware in my hands to cash the checks your slides write. When I’m presenting to you, I expect and encourage the same.

Prior to my exposure to UCS I worked with both IBM and HP servers and blades. I am an IBM Certified Blade Expert (although dated at this point.) IBM was in fact my A-Game server and blade vendor. This had a lot to do with the technology of the IBM systems as well as the overall product portfolio IBM brought with it. That being said I’d also be willing to concede that HP blades have moved above IBM’s in technology and innovation, although IBM’s MAX5 is one way IBM is looking to change that.

When I first heard about Cisco’s launch into the server market I thought, and hoped, it was a joke. I expected some Frankenstein of a product where I’d place server blades in Nexus or Catalyst chassis. At the time I was working heavily with the Cisco Nexus product line, primarily the 5000, 2000, and 1000v. I was very impressed with these products, the innovation involved, and the overall benefit they’d bring to the customer. All the love in the world for the Nexus line couldn’t overcome my feeling that there was no way Cisco could successfully move into servers.

Early in 2009 my company submitted my resume, among several others, to Learning at Cisco and the business unit in charge of UCS. This was part of an application process for learning partners to be invited to the initial external Train The Trainer (TTT) and to participate in teaching UCS to Cisco, partners, and customers worldwide. Two other engineer/trainers (Dave Alexander and Fabricio Grimaldi) and I were selected from my company to attend. The first interesting thing about the process was that the three of us were selected above CCIEs, 2x CCIEs, and more experienced instructors from our company based on our server backgrounds. It seemed Cisco really was looking to push servers, not some network adaptation.

During the TTT I remained very skeptical. The product looked interesting but not ‘game-changing.’ The user interfaces were lacking and definitely showed their Alpha and Beta colors. Hardware didn’t always behave as expected and the real business/technological benefits of the product didn’t shine through. That being said remember that at this point the product was months away from launch and this was a very Beta version of hardware/software we were working with. Regardless of the underlying reasons I walked away from the TTT feeling fully underwhelmed.

I spent the time on my flight back to the East Coast from San Jose looking through my notes and thinking about the system and components. It definitely had some interesting concepts but I didn’t feel it was a platform I would stake my name to at this point.

Over the next couple of months Fabricio Grimaldi and I assisted Dave Alexander (http://theunifiedcomputingblog.com) in developing the UCS Implementation certification course. Through this process I spent a lot of time digging into the underlying architecture, relating it back to my server admin days, and whiteboarding the concepts and connections in my home office. Additionally I got more and more time on the equipment to ‘kick-the-tires.’ During this process Dave, Fabricio, and I began instructing an internal Cisco course known as UCS Bootcamp. The course was designed for Cisco engineers from both pre-sales and post-sales roles and focused specifically on the technology as a product deep dive.

It was over these months of having discussions on the product, wrapping my head around the technology, and developing training around the components that the lock cylinders in my brain started to click into place and finally the key turned: UCS changes the game for server architecture. The skeptic had become a convert.

UCS the game changer:

The term game changer gets thrown around all willy-nilly in this industry. Every minor advancement is touted by its owner as a ‘Game Changer.’ In reality ‘Game Changers’ are few and far between. In order to qualify you must actually shift the status quo, not just improve upon it. To use vacuums as an example, if your vacuum sucks harder it just sucks harder, it doesn’t change the game. A Dyson vacuum may vacuum better than anyone else’s but Roomba (http://www.irobot.com/uk/home_robots.cfm) is the one that changed the game. With Dyson I still have to push the damn thing around the living room, with Roomba I watch it go.

In order to understand why UCS changes the game rather than improving upon it, you first need to define UCS:

UCS is NOT a blade system, it is a server architecture

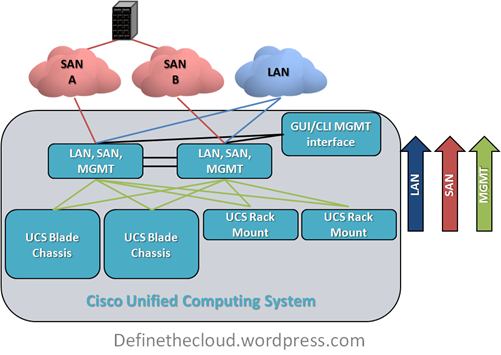

Cisco’s Unified Computing System (UCS) is not all about blades; it is about rack mount servers, blade servers, and management being used as a flexible pool of computing resources. Because of this it has been likened to an x86-64 based mainframe system.

UCS takes a different approach from the original blade system designs. It’s not a solution for data center point problems (power, cooling, management, space, cabling) in isolation; it’s a redefinition of the way we do computing.

Instead of asking ‘How can I improve upon current architectures?’

Cisco/Nuova asked

‘What’s the purpose of the server, and what’s the best way to accomplish that goal?’

Many of the ideas UCS utilizes have been tried and implemented in other products before: Unified I/O, single point of management, modular scalability, etc., but never all in one cohesive design.

There are two major features of UCS that I call ‘the cake’ and three more that are really icing. The two cake features are the reason UCS is my A-Game and the others just further separate it.

- Unified Management

- Workload Portability

Unified Management:

Blade architectures are traditionally built with space savings as a primary concern. In order to do this a blade chassis is built with a shared LAN, SAN, power, and cooling infrastructure and an onboard management system to control server hardware access, fan speeds, power levels, etc. M. Sean McGee describes this much better than I could hope to in his article The “Mini-Rack” approach to Blade Design (http://bit.ly/bYJVJM.) This traditional design saves space and can also save on overall power, cooling, and cabling but causes pain points in management among other considerations.

UCS was built from the ground up with a different approach, and Cisco has the advantage of zero legacy server investment which allows them to execute on this. The UCS approach is:

- Top-of-Rack networking should be Top-of-Rack, not repeated in each blade chassis.

- Management should encompass the entire architecture not just a single chassis.

- Blades are only 40% of the data center server picture, rack mounts should not be excluded.

The key difference here is that all management of the LAN, SAN, server hardware, and chassis itself is pulled into the access layer and performed on the UCS Fabric Interconnect, which provides all of the switching and management functionality for the system. The system was built from the ground up with this in mind, and as such it is designed into each hardware component. Other systems that provide a single point of management do so by layering on additional hardware and software components in order to manage underlying component managers. Additionally these other systems only manage blade enclosures, while UCS is designed to manage both blades and traditional rack mounts from one point. The rack-mount management functionality will be available in firmware by the end of CY10.

To put this in perspective Cisco UCS provides a very similar rapid repeatable physical server deployment model to the virtual server deployment model VMware provides. Through the use of granular Role Based Access Control (RBAC) UCS ensures that organizational changes are not required, while at the same time providing the flexibility to streamline people and process if desired.

Workload Portability:

Workload portability has significant benefits within the data center. The concept itself is usually described as ‘statelessness.’ If you’re familiar with VMware this is the same flexibility VMware provides for virtual machines, i.e. there is no tie to the underlying hardware. One of the key benefits of UCS is the ability to apply this type of statelessness at the hardware level. This removes the tie of the server or workload to the blade or slot it resides in, and provides major flexibility to maintenance and repair cycles, as well as deployment times for new or expanding applications.

Within UCS all management is performed on the Fabric Interconnect through the UCS Manager GUI or CLI. This includes any network configuration for blades, chassis, or rack-mounts, and all server configuration including firmware, BIOS, NIC/HBA, and boot order among other things. The actual blade is configured through an object called a ‘service profile.’ This profile defines the server on the network as well as the way in which the server hardware operates (BIOS/Firmware, etc.)

All of the settings contained within a service profile are traditionally configured, managed, and stored in hardware on a server. Because these are now defined in a configuration file the underlying hardware tie is stripped away and a server workload can be quickly moved from one physical blade to another without requiring changes in the networks or storage arrays. This decreases maintenance windows and speeds roll-out.

Within UCS, service profiles can be created using templates or pools, an approach which is unique to UCS. This further increases the benefits of service profiles and decreases the risk inherent in multiple configuration points and case-by-case deployment models.
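To make the service profile idea a little more concrete, here is a minimal Python sketch of the concept. It is not the UCS Manager API or its object model: the class names, fields, blade labels, and the MAC/WWPN values are all hypothetical and chosen only for illustration. It shows a template stamping out a profile whose identities come from pools, and the profile moving to a different blade while its identity stays the same.

```python
from dataclasses import dataclass
from typing import List, Optional


class IdentityPool:
    """Hypothetical pool that hands out unique identities (MACs, WWPNs) in order."""

    def __init__(self, values: List[str]) -> None:
        self._free = list(values)

    def allocate(self) -> str:
        if not self._free:
            raise RuntimeError("identity pool exhausted")
        return self._free.pop(0)


@dataclass
class ServiceProfile:
    """The server's identity and settings, held as data rather than in hardware."""
    name: str
    mac: str
    wwpn: str
    boot_order: List[str]
    bios_policy: str
    blade: Optional[str] = None  # physical blade currently associated, if any


@dataclass
class ProfileTemplate:
    """Shared settings; each instance draws its own identities from the pools."""
    boot_order: List[str]
    bios_policy: str

    def instantiate(self, name: str, macs: IdentityPool, wwpns: IdentityPool) -> ServiceProfile:
        return ServiceProfile(
            name=name,
            mac=macs.allocate(),
            wwpn=wwpns.allocate(),
            boot_order=list(self.boot_order),
            bios_policy=self.bios_policy,
        )


def associate(profile: ServiceProfile, blade: str) -> None:
    """Point the profile at a physical blade; the identity itself does not change."""
    profile.blade = blade


# Made-up example values, not real addresses from any deployment.
mac_pool = IdentityPool(["00:00:5E:00:53:01", "00:00:5E:00:53:02"])
wwpn_pool = IdentityPool(["20:00:00:00:00:00:00:01", "20:00:00:00:00:00:00:02"])
template = ProfileTemplate(boot_order=["san", "lan"], bios_policy="default")

web01 = template.instantiate("web01", mac_pool, wwpn_pool)
associate(web01, "chassis-1/blade-3")
identity_before = (web01.mac, web01.wwpn)

# Blade 3 needs maintenance: move the workload to a spare blade.
associate(web01, "chassis-2/blade-1")
identity_after = (web01.mac, web01.wwpn)
```

Because the MAC and WWPN travel with the profile rather than the blade, the LAN and SAN see the same server after the move, which is why no network or storage array changes are needed.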

UCS Profiles and Templates

These two features and their real world applications and value are what place UCS in my A-Game slot. These features will provide benefits to ANY server deployment model, and are unique to UCS. While subcomponents exist within other vendors they are not:

- Designed into the hardware

- Fully integrated without the need for additional hardware and software and licensing

- As robust

Icing on the cake:

- Dual socket server memory scalability and flexibility (Cisco memory expander technology)

- Integration with VMware and advanced networking for virtual switching

- Unified fabric (I/O consolidation)

Each of these features also offers real world benefits, but the real heart of UCS is the unified management and server statelessness. You can find more information on these other features through various blogs and Cisco documentation.

When is it time for my B-Game?:

By now you should have an understanding as to why I chose UCS as my A-Game (not to say you necessarily agree, but that you understand my approach.) So what are the factors that move me towards my B-Game? I will list three considerations and the qualifying question that would finalize a decision to position a B-Game system:

| Consideration | Qualifying question and decision |
| --- | --- |
| Infiniband | If the customer is using Infiniband for networking, UCS does not currently support it. I would first assess whether there was an actual requirement for Infiniband or if it was just the best option at the time of last refresh. If Infiniband is required I would move to another solution. |
| Non-Intel Processors | Requirement for non-Intel processors would steer me towards another vendor as UCS does not currently support non-Intel. As above I would first verify whether non-Intel was a requirement or a choice. |
| Requirement for chassis based storage | If a customer had a requirement for chassis based storage, there is no current Cisco offering for this within UCS. This is however very much a corner case and only a configuration I would typically recommend for single chassis deployments with little need to scale. In-chassis storage becomes a bottleneck rather than a benefit in multi-chassis configurations. |

While there are other reasons I may have to look at another product for a given engagement they are typically few and far between. UCS has the right combination of entry point and scalability to hit a great majority of server deployments. Additionally as a newer architecture there is no concern with the architectural refresh cycle of other vendors. As other blade solutions continue to age there will be an increased risk to the customer in regards to forward compatibility.

Summary:

UCS is not the only server or blade system on the market, but it is the only complete server architecture. Call it consolidated, unified, virtualized, whatever but there isn’t another platform to combine rack-mounts and blades under a single architecture with a single management window and tools for rapid deployment. The current offering is appropriate for a great majority of deployments and will continue to get better.

If you’re considering a server refresh or new deployment it would be a mistake not to take a good look at the UCS architecture. Even if it’s not what you choose it may give you some ideas as to how you want to move forward, or features to ask your chosen vendor for.

Even if you never buy a UCS server you can still thank Cisco for launching UCS. The lower pricing you’re getting today, and the features being put in place on other vendors’ product lines, are being driven by a new server player in the market and the innovation they launched with.

Comments, concerns, complaints always appreciated!
